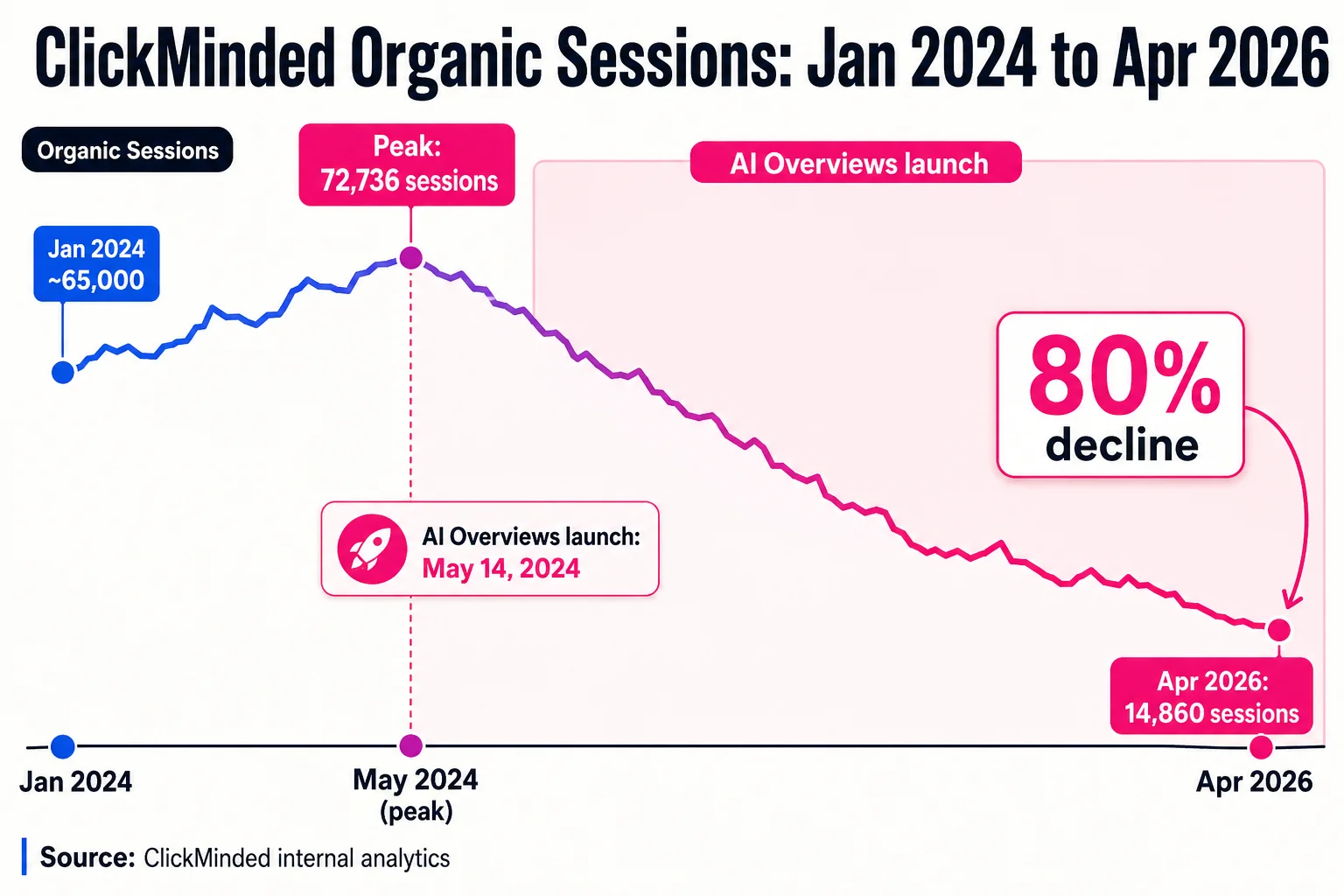

Our organic traffic peaked at 72,736 sessions in May 2024. By April 2026, it was 14,860. That’s an 80% decline on a steady, unambiguous curve that tracks almost exactly with Google’s rollout of AI Overviews.

We’re not telling you this to be dramatic. We’re telling you this because most SEO strategy guides in 2026 are still selling you the 2021 playbook with an “AI” sticker on the cover, and we’d rather start with what actually happened to us.

The uncomfortable reality is that Google launched AI Overviews on May 14, 2024, and some estimates put those overviews on more than 30% of all queries by Q1 2026. Seer Interactive’s analysis of 3,119 informational queries found organic CTR dropped 61% where AI Overviews appear. Zero-click searches hit roughly 65% of all Google queries in Q1 2026. Our own Search Console data shows the same pattern from a different angle: between January and August 2025, our search impressions grew 48% while clicks fell 55%. More visibility, fewer visits.

That is the actual environment you’re building an SEO strategy for.

This guide won’t pretend that’s fine, and it won’t pretend SEO is dead either. What it will do is give you an 8-step framework built for the search environment that exists right now, not the one that existed three years ago. (SEO is one channel inside a larger system — for the full multi-channel frame this guide sits inside, see our digital marketing strategy guide.)

Who this is for. This is an SEO strategy guide for three kinds of people: marketers and founders who know SEO matters but don’t have a coherent plan that accounts for AI search; working SEOs who’ve been running tactics in isolation and want to systematize; and anyone who watched their traffic chart go sideways in 2024 and has been asking whether any of this is still worth doing.

It’s also written to work as an SEO strategy for beginners, meaning we’ll explain the reasoning behind each step, not just the mechanical checklist. If you already know why SERP analysis matters, you can skim the framing and focus on the process.

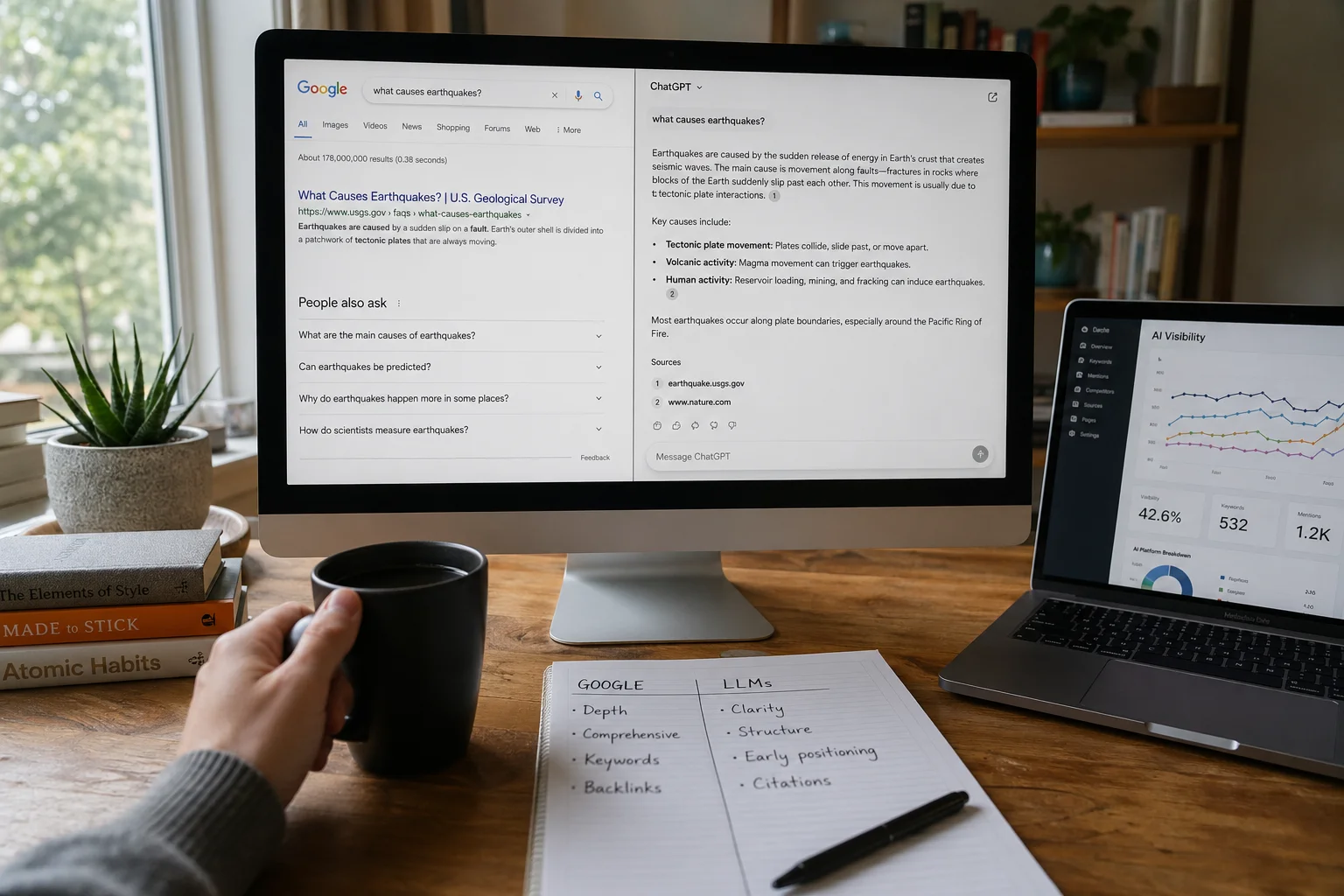

The reality of SEO strategy in 2026 is that you’re no longer optimizing for a single search box. Google is one surface. Reddit, YouTube, and ChatGPT are others, and a meaningful share of your potential audience is already using them as primary search tools. The framework below accounts for all of that.

The 8 steps. Here’s the structure of this guide:

1. Define your SEO goals and the right metrics to track them

2. Find topics worth ranking for (keyword research that works in 2026)

3. Analyze the SERP before you write a single word

4. Create content that wins in the current environment

5. On-page SEO: the fundamentals that still move rankings

6. Authority building: how Google and LLMs actually decide who to trust

7. AI search optimization: getting cited in AI Overviews and LLM responses

8. Content updates: the highest-ROI activity most teams are underinvesting in

Steps 7 and 8 are where most competitor guides either go vague or skip entirely. We’ll go deep on both.

Before you work through the steps, grab the template below. It’s the same worksheet the ClickMinded team uses, and it gives you somewhere to put the outputs from each step as you go.

One more thing before we start. We said the traffic decline tracks with AI Overviews, and that’s true, but the honest version is slightly more complicated. Not every query type is hit equally. Informational queries are where the damage is worst. Navigational and transactional queries are more insulated. Some of our pages that rank for specific tool-based queries (our button generator, for example, which drove 84,412 sessions in the last 12 months) have been far more resilient than our editorial content. That pattern shapes the whole framework.

The goal of this guide isn’t to tell you SEO is fine. The goal is to show you where it still works, what’s changed structurally, and exactly what to do about it.

Three things broke your SEO playbook, and most guides are pretending otherwise

Most “SEO strategy 2026” guides you’ll find right now are recycled 2022 content with a paragraph about ChatGPT dropped somewhere in the middle. They’ll tell you to do keyword research, build links, optimize your title tags, and maybe “consider AI Overviews.” Then they’ll move on as if the last two years didn’t happen.

That’s not a strategy. That’s a comfort blanket.

Three structural shifts changed how search actually works. If your current approach doesn’t account for all three, you’re optimizing for a search environment that no longer exists.

Shift 1: AI Overviews removed the click reward for ranking

Before May 2024, ranking in the top three on Google meant traffic. That relationship broke.

Seer Interactive’s analysis of over 3,000 informational queries found organic CTR dropped 61% where AI Overviews appear. Our own Search Console data shows the same pattern from a different angle: between January and August 2025, our search impressions grew 48% while our clicks fell 55%. Our CTR hit an all-time low of 0.59% in August 2025.

Read those numbers back: more visibility, less than half the clicks.
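Because CTR is clicks divided by impressions, the two percentages combine into a single figure. Here is a quick check of that arithmetic, assuming both percentages compare the same start and end points. The function name is ours, for illustration only.

```python
def relative_ctr_change(impressions_change: float, clicks_change: float) -> float:
    """Relative change in CTR, given relative changes in impressions and clicks.

    Pass changes as fractions: 0.48 for +48%, -0.55 for -55%.
    """
    # CTR = clicks / impressions, so the new CTR equals the old CTR
    # multiplied by (1 + clicks_change) / (1 + impressions_change).
    return (1 + clicks_change) / (1 + impressions_change) - 1


change = relative_ctr_change(impressions_change=0.48, clicks_change=-0.55)
print(f"CTR change: {change:.1%}")
```

On those inputs the click-through rate falls by roughly 70%, a steeper drop than either number suggests on its own.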

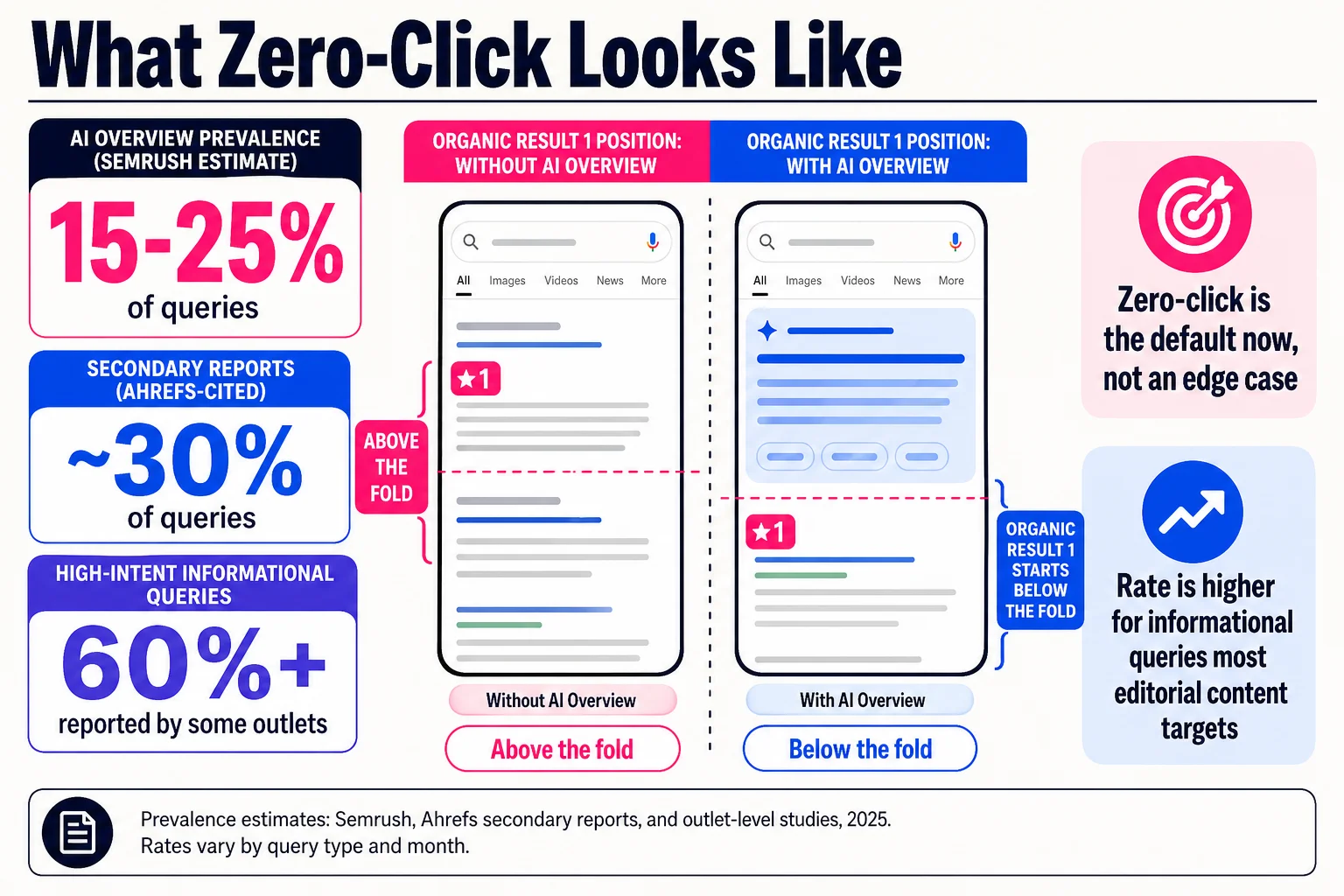

AI Overview prevalence estimates vary depending on who’s measuring and when. Semrush’s study put it at around 15-25% of queries depending on the month. Secondary reports citing Ahrefs data suggest it may reach 30% of queries across a broad sample. Some outlets have reported rates above 60% for certain query types. The honest answer is that the exact number shifts constantly, and the rate is higher for the informational queries most editorial content targets.

What doesn’t shift: zero-click is the default now for a large share of searches, not an edge case.

Shift 2: Google is one search surface, not the only one

A meaningful share of your audience already isn’t starting on Google.

An Axios analysis of Profound data found Reddit was one of the most cited sources across major AI platforms, with citation share varying dramatically by platform: around 23% in Perplexity, 5.5% in Google AI Overviews, and roughly 1.2% in ChatGPT. People are cross-referencing these platforms before making decisions.

This matters for SEO strategy in a specific way. The old model was: rank on Google, get traffic. The current model is: show up across surfaces where your audience actually looks, which includes Reddit threads, YouTube results, ChatGPT responses, and AI Overviews. A page that exists only as a Google ranking asset is now a partial asset.

The volume-at-scale content playbook accelerated this problem. Sites that published hundreds of thin, keyword-targeted posts trained Google’s quality systems to distrust them at the site level, and trained users to route around them entirely in favor of Reddit or YouTube where actual practitioners talk. Google’s August through December 2025 core updates specifically targeted synthetic content patterns and manipulative signals.

The answer isn’t to abandon Google. The answer is to stop treating it as the only place that matters.

Shift 3: Traffic concentration got more extreme, and most sites ignored it

The Pareto pattern in SEO has always existed. A small share of pages drives most of the value. What changed is how extreme that concentration became as lower-quality pages got devalued.

Our own numbers illustrate this. Out of 5,917 indexed pages, five pages drive 93% of our organic traffic. One page, our button generator, pulled 84,412 sessions in the last 12 months, more than the next four pages combined.

That’s not a content strategy success. That’s a warning sign disguised as a metric. If five pages hold up your entire SEO presence, the risk profile of your site is enormous.
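If you want to run the same concentration check on your own site, a minimal sketch looks like this. It assumes you have a per-page sessions export. The paths and numbers below are made up for illustration and are not our data.

```python
def top_n_share(sessions_by_page: dict[str, int], n: int = 5) -> float:
    """Share of total sessions that comes from the n highest-traffic pages."""
    total = sum(sessions_by_page.values())
    if total == 0:
        return 0.0
    top = sorted(sessions_by_page.values(), reverse=True)[:n]
    return sum(top) / total


# Hypothetical export: page path -> organic sessions over the period.
sessions = {
    "/tool-a/": 8000,
    "/guide-b/": 900,
    "/guide-c/": 500,
    "/post-d/": 400,
    "/post-e/": 200,
}
print(f"Top 2 pages: {top_n_share(sessions, n=2):.0%} of sessions")
```

A result that stays above 90% with a small `n` is the warning sign described above.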

The lesson isn’t to build more pages. The lesson is that most content teams are still spreading effort across dozens of low-value targets while their highest-potential pages get maintenance-level attention. The right strategy concentrates resources on pages that can actually win, and keeps them winning.

The guides that are still treating SEO as a content volume game, a link quantity game, or a keyword density game are describing a tactic set that Google and users have both moved past. The rest of this guide is built around what replaced it.

SEO is now a system for getting found everywhere your audience looks

If your definition of SEO is “rank on Google, get traffic,” the last two years broke that definition. What replaced it is messier, more expensive to execute, and also more defensible if you do it right.

The working definition we use at ClickMinded: SEO strategy is a system for earning discovery across every surface where your audience actually searches, then converting that visibility into something that has value for your business. Google is still the biggest surface. But it is one of several.

When someone wants to know whether a SaaS tool is worth trying, they might start on Google. They also might search Reddit for real user experiences, ask ChatGPT for a comparison, watch a YouTube teardown, or check Perplexity for a structured summary. Your content either shows up across those surfaces or it doesn’t. A strategy that optimizes only for Google organic listings is now a partial strategy.

This doesn’t mean you split your effort evenly across every platform. Search Engine Land’s 2026 SEO strategy framework suggests roughly 60-70% of effort toward Google (content updates, structured data, and high-intent pages), around 20% on site maintenance and technical health, and the remaining 10% on non-Google surfaces like Reddit, YouTube, and review sites. That allocation will vary by industry and audience, and the 10% non-Google figure comes from a single practitioner piece rather than a controlled study, so treat it as a rough starting point rather than a fixed rule. The underlying point stands: non-Google surfaces deserve deliberate investment, even if Google still gets the majority of your attention.

What that means practically: you pick topics that work across formats, not just keyword queries. You build pages that give AI systems something clear to cite. You participate in the communities where your audience talks, rather than only publishing content they might eventually discover.

The 8 steps in this guide are organized around four underlying pillars that make a strategy coherent instead of just a checklist:

Goals (Step 1): What business result does this SEO work need to produce, and how will you measure it given that clicks are no longer a reliable proxy for impact?

Topics (Steps 2-3): Which queries are worth ranking for, across which surfaces, and what does the current search environment look like before you commit resources to a page?

Content (Steps 4-5): How do you create and structure content that wins in organic results, earns AI Overview citations, and holds up when someone cross-references it on Reddit or ChatGPT?

Authority (Steps 6-8): How do you build the kind of credibility that both Google and LLMs use to decide whose content gets surfaced, then maintain that credibility as content ages?

These pillars aren’t new concepts. What changed is how each one executes. Building authority no longer means collecting backlinks from directories. Choosing topics no longer means targeting keyword volume with no attention to SERP intent or AI Overview prevalence. Creating content no longer means matching a word count to whatever’s ranking.

The 8 steps that follow go through each pillar in order, with a concrete process and specific outputs for each one.

The first four steps are where most SEO strategies quietly fail

Most SEO plans skip straight to content production and call that strategy. The result is articles targeting the wrong goals, measuring the wrong numbers, and competing on SERPs they had no business entering. The four steps below fix that before you write a single word.

Step 1: Define goals that survive zero-click search

The KPI you pick shapes every decision that follows. Domain authority and total keywords ranking are the two most common choices, and both are wrong for 2026. Domain authority is a third-party estimate with no direct relationship to revenue. Total keywords ranking tells you nothing about whether those keywords matter or whether anyone clicked.

Track these instead:

Branded vs. non-branded traffic split: non-branded growth means your keyword reach is expanding; rising branded search means people are looking for you specifically. You want both moving, and you want to know which one and why.

Share of voice across topics: how often your content appears across ranking positions, snippets, and AI Overview citations for a topic cluster relative to competitors. It’s a better proxy for competitive position than any single rank.

Assisted conversions from organic: requires a working GA4 attribution model, but it’s the only metric that connects SEO visibility to pipeline. Track sessions that touched organic at any point in the conversion path, not just last-click.

Organic conversion rate: a page sending 500 sessions at 4% conversion beats a page sending 5,000 that bounce.

Set your KPIs before you build the topic list. They determine which topics are worth targeting and what “winning” looks like per page.
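The branded vs. non-branded split is the easiest of these metrics to compute yourself. The sketch below assumes a query-level clicks export (for example from Search Console) and a list of brand terms you supply. The sample queries and numbers are hypothetical.

```python
import re


def branded_split(clicks_by_query: dict[str, int], brand_terms: list[str]) -> dict[str, int]:
    """Split clicks into branded and non-branded buckets.

    A query counts as branded if it contains any brand term as a whole
    word, case-insensitively.
    """
    if not brand_terms:
        raise ValueError("brand_terms must contain at least one term")
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in brand_terms) + r")\b",
        re.IGNORECASE,
    )
    totals = {"branded": 0, "non_branded": 0}
    for query, clicks in clicks_by_query.items():
        bucket = "branded" if pattern.search(query) else "non_branded"
        totals[bucket] += clicks
    return totals


# Hypothetical export: query -> clicks.
clicks = {
    "clickminded seo course": 120,
    "seo strategy template": 300,
    "ClickMinded login": 80,
}
split = branded_split(clicks, brand_terms=["clickminded"])
print(split)
```

Run it on two comparable periods and compare the buckets, so you can see which side is moving.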

Output: A one-page KPI document with 3-5 metrics, the tool tracking each one, and a target range tied to a specific business goal. Start with our marketing strategy generator if you need a framework connecting these to broader objectives.

Step 2: Build a topic list from how people actually talk

Keyword research in 2026 starts somewhere most tools can’t see: the language your customers use before they ever type a query into Google.

The process has four stages. First, mine Reddit. Search your topic and read the actual question threads. The phrasing people use there — “I’ve tried X and it still doesn’t work,” “what’s the difference between X and Y when you’re doing Z” — is often closer to real search intent than any keyword tool’s top suggestion. KeywordInsights.ai has documented that Reddit surfaces conversational, high-intent queries that volume-based tools miss.

Second, pull language from sales calls and support tickets. Scan call recordings or your support inbox for the exact phrases prospects use to describe their problem. These frequently match the queries they searched before finding you.

Third, use ChatGPT as an audience proxy. Give it a persona matching your target customer and ask what questions that person would have at each decision stage. Use this for ideation only — ChatGPT generates plausible-sounding queries, but it lacks the accuracy needed for direct research and needs validation against real search data.

Fourth, cluster and score. Group raw queries by intent (informational, commercial, transactional) and run them through a keyword tool for volume, difficulty, and SERP feature prevalence. Flag any query where an AI Overview already dominates — click-through on those is poor.
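A first pass at the intent grouping can be automated before you open a keyword tool. This is a rough heuristic sketch: the modifier lists are our assumptions, not a standard, and edge cases still need a human read.

```python
from collections import defaultdict

# Assumed modifier lists for illustration; tune them for your own niche.
TRANSACTIONAL = ("buy", "pricing", "price", "discount", "coupon", "free trial")
COMMERCIAL = ("best", "vs", "review", "reviews", "alternative", "alternatives", "top")


def classify_intent(query: str) -> str:
    """Label a query by intent using whole-word modifier matches."""
    # Pad with spaces so "top" does not match inside "stop".
    padded = f" {' '.join(query.lower().split())} "
    if any(f" {m} " in padded for m in TRANSACTIONAL):
        return "transactional"
    if any(f" {m} " in padded for m in COMMERCIAL):
        return "commercial"
    return "informational"


def group_by_intent(queries: list[str]) -> dict[str, list[str]]:
    """Group raw queries into intent buckets."""
    groups: dict[str, list[str]] = defaultdict(list)
    for q in queries:
        groups[classify_intent(q)].append(q)
    return dict(groups)


raw = [
    "best seo tools",
    "seo software pricing",
    "what is a canonical tag",
    "how to stop keyword cannibalization",
]
print(group_by_intent(raw))
```

Volume, difficulty, and SERP feature flags still come from your keyword tool. This only handles the grouping.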

Output: A spreadsheet with 50-100 topic clusters, each tagged by intent, estimated volume, SERP feature flags, and whether existing content already competes for that cluster. Use our keyword planner to pull the volume and difficulty data.

Step 3: Analyze the SERP before you write anything

Ranking for a keyword and earning traffic from it are different things. Before committing to a page, spend 20 minutes on a six-part SERP analysis.

Compile the full keyword set for the topic. Document every SERP feature present: AI Overview, featured snippet, People Also Ask, video carousel, local pack. Identify the dominant format — listicle, step-by-step guide, comparison table, video. Decode the intent by reading the top three results, not just their titles. Analyze the top three pages for gaps you could fill. Then assess feasibility honestly: if positions 1-5 are occupied by high-authority publications with thousands of backlinks and fresh content, that’s a bad target for a new page.

SERP volatility is worth tracking at the topic level too. A cluster where the top result changes every few weeks means Google is still sorting out what belongs there. That instability can work for you or against you.

Output: A completed SERP brief for each target page: dominant format, intent confirmed, content gaps identified, AI Overview presence noted, and a go/no-go decision.
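If you keep briefs in code or a structured export rather than a document, one possible shape is sketched below. The field names and the go/no-go rule are our assumptions for illustration. The rule loosely encodes the feasibility check above and is not a tested threshold.

```python
from dataclasses import dataclass, field


@dataclass
class SerpBrief:
    topic: str
    dominant_format: str            # e.g. "listicle", "step-by-step guide"
    intent: str                     # confirmed by reading the top results
    has_ai_overview: bool
    serp_features: list[str] = field(default_factory=list)
    content_gaps: list[str] = field(default_factory=list)
    high_authority_in_top_5: int = 0  # count judged by the analyst

    def decision(self) -> str:
        """Hypothetical rule: skip topics with no gap to fill, or where
        nearly all of the top five are entrenched high-authority pages."""
        if not self.content_gaps:
            return "no-go"
        if self.high_authority_in_top_5 >= 4:
            return "no-go"
        return "go"


brief = SerpBrief(
    topic="seo strategy template",
    dominant_format="step-by-step guide",
    intent="informational",
    has_ai_overview=True,
    serp_features=["AI Overview", "People Also Ask"],
    content_gaps=["no first-party traffic data"],
    high_authority_in_top_5=2,
)
print(brief.decision())
```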

Step 4: Create content that can actually prove what it claims

The biggest reason content fails E-E-A-T signals isn’t thin copy or missing keywords. It’s the absence of first-party evidence. A page about running Facebook ads with no screenshots, no real account data, no specific numbers, and no named author with verifiable experience tells Google — and increasingly AI Overview systems — very little about whether it’s trustworthy.

First-party evidence means screenshots from your actual accounts, data from real campaigns, quotes attributed to named and verifiable people, and an author bio with credentials that match the topic. This guide cites ClickMinded’s own GSC data with exact figures because invented statistics are both dishonest and increasingly detectable.

Beyond evidence, match your format to what the SERP rewards. Step-by-step guides win on procedural queries. Comparison pages win on “X vs Y.” Original research wins for statistics queries. Build using our blog post generator to ensure structure matches the format your SERP analysis identified.

Output: A published page with a named author, verifiable first-party evidence, a format matched to SERP intent, and at least one data point no competitor can copy without doing the same work.

Once you have the content, these two steps determine whether it actually ranks

Step 5: On-page SEO as clarity, not code tricks

On-page SEO has a reputation problem. Mention it and most people picture keyword density calculators, title tag character counts, and someone arguing about whether H2s should include the exact-match phrase. That’s not what moves rankings in 2026.

What actually moves rankings is whether Google’s systems can understand your page clearly and whether a reader can get what they need without working for it. Those two things are almost the same thing.

The practical checklist is shorter than most guides make it:

Your title tag and H1 should match the search intent, not just the keyword. A title that reads like a human wrote it for a human outperforms exact-match stuffing every time now.

Your heading hierarchy should tell the story of the page. H1 introduces the topic. H2s cover the main subtopics. H3s handle specific points within each. If someone read only your headings, they should understand what the page covers. This also matters for AI Overview extraction: AI systems parse heading structure to pull quoted passages, so a clear hierarchy directly increases your chance of being cited.

Semantic entities belong in the copy. Not keywords repeated ten times, but related concepts, named examples, and specific terminology that confirms topical depth. A page about on-page SEO that never mentions schema markup, internal linking, or search intent reads as shallow to both Google’s NLP models and LLM retrieval systems.

JSON-LD schema is the single most underused on-page element. Roughly 31% of websites have any structured data at all, which means if you implement Article, FAQ, HowTo, or Breadcrumb schema correctly, you are already ahead of most competitors. The CTR lift from rich results is real, though the exact percentages you’ll see cited (20-30%) come from marketing analyses rather than controlled studies, so treat those numbers as directional.

Internal links should be descriptive. “Click here” tells Google nothing. “How to run a SERP analysis” tells Google exactly what the destination page covers and why this page considers it relevant. Use anchor text that describes the destination topic.

Images need alt text that describes the image content, not the keyword you want to rank for.

That’s the list. None of it is complicated. The reason pages fail on-page is usually neglect, not misunderstanding.
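For the schema item, here is a minimal sketch of generating Article JSON-LD with Python's standard library. The property names are standard schema.org vocabulary. The function name, author, dates, and URL are placeholders we made up.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str,
                   date_modified: str, url: str) -> str:
    """Return a <script> tag containing Article structured data."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,   # ISO 8601 date
        "dateModified": date_modified,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = article_jsonld(
    headline="SEO Strategy: An 8-Step Framework",
    author_name="Jane Example",
    date_published="2026-04-01",
    date_modified="2026-04-15",
    url="https://www.example.com/seo-strategy/",
)
print(snippet)
```

Validate the output with a structured data testing tool before deploying, since eligibility rules for rich results change over time.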

Output: A page where every element (title, headings, body copy, schema, internal links) answers the same question: what is this page about, and why should someone trust it?
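The internal-link rule is easy to audit automatically. The sketch below uses Python's built-in HTML parser to flag links with generic anchor text. The list of generic phrases is our assumption, so extend it to fit your site.

```python
from html.parser import HTMLParser

# Assumed list of non-descriptive anchors; extend as needed.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this page", "link"}


class AnchorAuditor(HTMLParser):
    """Collect (href, anchor text) pairs whose text is generic."""

    def __init__(self) -> None:
        super().__init__()
        self._href = None
        self._text = []
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace and lowercase before comparing.
            text = " ".join("".join(self._text).split()).lower()
            if text in GENERIC_ANCHORS:
                self.flagged.append((self._href, text))
            self._href = None


html = (
    '<p>To learn the process, <a href="/serp-analysis/">click here</a>. '
    'See also <a href="/seo-checklist/">our SEO checklist</a>.</p>'
)
auditor = AnchorAuditor()
auditor.feed(html)
print(auditor.flagged)
```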

Check our SEO checklist to verify you haven’t missed anything before publishing.

Step 6: Authority building for LLMs and Google simultaneously

The old model was simple: get links from other websites, rank higher. The new model is more interesting, and the tactics are different enough that most advice written before 2024 is worth ignoring.

The core reframe is this: you are not building links. You are building your entity’s footprint across sources that both Google and LLMs use to assess credibility.

LLMs operate on two citation mechanisms that are worth understanding separately. The first is training-time association: when a model was trained, pages that were published early on a topic and cited frequently across the web built stronger parametric associations for that topic. Being the first credible source on a topic still pays dividends in model outputs even when the model isn’t retrieving live data.

The second mechanism is real-time retrieval. For fresh, niche, or high-stakes queries, LLMs pull live sources and select them using authority signals that resemble a knowledge graph: well-structured pages, citations from trusted domains, schema-defined organizational identity. This is where your entity schema matters. An Organization schema with a verified logo, consistent NAP data, and links to social profiles tells both Google and retrieval-enabled LLMs that your site has a defined identity, not just content floating without context.

Practically, this means three things.

Pursue placements that matter. That means original data studies that journalists cite, quotes in industry publications, podcast appearances that get show notes with links, and product features in legitimate comparison roundups. What it does not mean is paying $300 for a guest post slot on a site that exists only to sell guest post slots. Google has been deprioritizing those for years, and LLMs never trusted them.

Build schema site-wide. Every page should inherit your Organization schema. Category pages and guides should carry Article or HowTo markup. FAQ schema on Q&A sections. The goal is that any system parsing your site, whether it’s Googlebot or an LLM retrieval agent, encounters clean, structured identity signals everywhere.

Track where you appear. Set up prompt-based citation tests in ChatGPT and Perplexity for your target queries. Ask the models who they’d recommend for your topic category. If your site doesn’t come up, you have a citation gap, and you can usually trace it to either a missing entity signal or the absence of trusted third-party mentions.
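As a reference point for the site-wide schema item, an Organization block might look like the sketch below. The properties are standard schema.org vocabulary. The company details are placeholders, and you should use the exact name, address, and phone (NAP) that appear everywhere else you are listed.

```python
import json

# Placeholder identity data; replace every value with your real details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com/",
    "logo": "https://www.example.com/logo.png",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
        "addressCountry": "US",
    },
    # Links to the profiles that belong to the same entity.
    "sameAs": [
        "https://www.youtube.com/@example",
        "https://www.linkedin.com/company/example",
    ],
}

print(json.dumps(organization, indent=2))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag in your site-wide template.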

Output: An entity schema deployed site-wide, at least three PR or citation placements secured from sources a journalist would recognize, and a quarterly prompt test to verify LLM visibility.

The two steps almost every SEO guide skips entirely

Step 7: Optimizing for AI Overviews and LLM search

Most SEO guides end at authority building. This one doesn’t, because that’s where most of the opportunity actually sits right now.

The honest framing first: AI Overviews have taken real traffic from real sites, including ours. Between January and August 2025, our search impressions grew 48% while clicks fell 55%, hitting a CTR low of 0.59% in August. More people saw our content. Fewer clicked through. That’s the zero-click reality, and pretending optimization for AI surfaces is pure upside would be dishonest.

That said, AI citation is now a meaningful distribution channel on its own, separate from click-through. When ChatGPT or Perplexity recommends your site by name in response to a category-level query, that’s brand exposure that compounds. The question is how to get cited.

The answer is less mysterious than the tool vendors make it seem. SurferSEO’s AI Citation Report found that roughly 55% of AI Overview citations come from the first 30% of a page’s content, with the bottom 40% accounting for only about 21%. Put your direct answer at the top of the page, not buried in paragraph seven behind a long preamble. This is the single highest-leverage structural change most pages can make.

The domains that AI Overviews cite most heavily are high-trust, structured, and authoritative: YouTube at around 23.3%, Wikipedia at 18.4%, Google.com at 16.4% per that same SurferSEO analysis. Overlap between AI Overview citations and the traditional top-10 results has dropped to roughly 38%, which means ranking position alone no longer predicts whether you get cited. Structure and entity authority do.

Practically, this is what to do:

Write a direct answer to the query in the first 200 words of every informational page. Not a teaser, an actual answer. Then support it with depth below.

Add author credentials visibly. A byline with a named author, a short bio, and verifiable experience signals E-E-A-T to both Google’s systems and LLM retrievers. A page attributed to “Staff Writer” carries less weight than one attributed to a named practitioner with a track record.

Use structured data to define what your content answers. FAQ schema on Q&A sections, HowTo schema on process pages, and Article schema with a named author and publication date tell retrieval systems exactly what they’re reading. This is less about Google’s rich results now and more about making your content machine-parseable for any system querying it.

Run prompt tests quarterly. Open ChatGPT, Perplexity, and Gemini. Search for the queries you want to own. If a competitor’s name appears and yours doesn’t, you have a citation gap. Tools like Otterly.AI, FAII Intelligence, and SE Visible automate this monitoring across ChatGPT, Perplexity, Gemini, and Copilot. Worth running at least once per quarter on your top 20 target queries.
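The first item on that list, a direct answer in the first 200 words, can be checked mechanically. This sketch assumes you already have the page's visible text as a string and know the sentence you consider the answer. Both inputs below are hypothetical.

```python
def answer_position(page_text: str, answer_phrase: str, word_limit: int = 200):
    """Report how early answer_phrase appears in page_text.

    Matching is case-insensitive. Returns None if the phrase is empty
    or not found.
    """
    if not answer_phrase.strip():
        return None
    idx = page_text.lower().find(answer_phrase.lower())
    if idx == -1:
        return None
    total_words = len(page_text.split())
    words_before = len(page_text[:idx].split())
    return {
        "starts_at_word": words_before + 1,
        "within_word_limit": words_before < word_limit,
        "relative_position": words_before / total_words,
    }


# Hypothetical page: 250 words of preamble, then the answer.
page = "intro " * 250 + "SEO strategy is a system for earning discovery."
result = answer_position(page, "SEO strategy is a system")
print(result)
```

A `relative_position` above 0.3 means the answer sits outside the first 30% of the page, the zone the SurferSEO figure above points to.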

One more thing to internalize: AI search and Google search now require somewhat different content postures. Google still rewards depth and comprehensive coverage. LLMs reward clarity, structure, and early positioning of the core answer. A page optimized only for one surface underperforms on the other. The fix is usually structural rather than requiring entirely separate content.

Output: Every key informational page has a direct answer in the first 200 words, visible author credentials, and appropriate schema. You’ve run at least one prompt-test audit and know which queries you appear in and which you don’t.

Step 8: The content update framework (your highest-ROI activity in 2026)

Publishing new content gets most of the attention. Updating existing content gets most of the results.

Backlinko’s “Content Relaunch” case study documented a 260.7% organic traffic increase in 14 days from a single post update. Their Skyscraper Technique 2.0 documented a 652.1% lift in 7 days. Those are outlier numbers, but they illustrate something real: a well-ranked page with decaying traffic and outdated content has an enormous amount of recoverable value compared to a brand-new page starting from zero authority and zero links.

We run our own editorial calendar on this logic. Not because we have a clean before/after number to show you, but because the math of existing link equity plus a targeted refresh is consistently better than the math of a new page with nothing behind it.

The framework has three tiers.

Tier 1: Protect. These are your pages currently cited in AI Overviews or ranking in top 3 for high-value queries. They get reviewed every 90 days, minimum. The goal is not to rewrite them but to verify that facts are still accurate, that examples haven’t aged out, and that structure is still optimized for AI citation (direct answer up front, schema in place, author credible). Small issues compound fast on pages already under scrutiny.

Tier 2: Refresh. Pages that had meaningful traffic, have at least 10 referring domains protecting their link equity, but have seen a significant decline from their peak. The threshold for action: traffic down 50% or more over 6 to 12 months. These get genuine content updates: new data, revised examples, improved heading structure, expanded coverage of subtopics that now rank for the query but weren’t in the original. You’re not rewriting the page from scratch; you’re upgrading it to match what the current SERP rewards.

Tier 3: Consolidate or cut. Thin pages that are cannibalizing each other, ranking for nothing, and diluting crawl budget. The options are merge (301 the weaker page into the stronger one, absorb the content), noindex (if the page has a legitimate use case but no search value), or delete (if it serves no purpose for users or the site). Most sites let this category quietly accumulate for years. Pruning it is unglamorous, but the gains are real.

A practical cadence: review roughly 20 to 30% of your content catalog per quarter. That sounds like a lot, but most pages will be a 10-minute check that results in no action. The ones that need work surface quickly once you’re looking at traffic trends and citation data side by side.

For deciding which pages to prioritize, pull your Google Search Console data filtered by impressions-to-clicks ratio. Pages with high impressions and very low clicks are candidates for Tier 1 and 2 review. Pages with nearly zero impressions and no links are your Tier 3 candidates.

Output: A tiered content audit spreadsheet with every indexed page sorted into Protect, Refresh, or Consolidate. A 90-day update calendar for Tier 1 pages. A defined threshold (we use 50% traffic decline from peak as the Refresh trigger) so decisions aren’t judgment calls every time.
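The tier rules translate directly into a small classifier. The sketch below uses the thresholds stated above (top 3 or AI Overview citation, 10 referring domains, a 50% decline from peak). The field names, the near-zero impressions cutoff, and the "Review manually" fallback are our assumptions for pages that match no rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageStats:
    url: str
    best_position: Optional[float]  # best rank on a high-value query, None if unranked
    cited_in_ai_overview: bool
    referring_domains: int
    peak_sessions: int
    current_sessions: int
    impressions: int


def classify(page: PageStats, near_zero_impressions: int = 10) -> str:
    """Sort a page into Protect, Refresh, or Consolidate."""
    if page.cited_in_ai_overview or (
        page.best_position is not None and page.best_position <= 3
    ):
        return "Protect"
    decline = 0.0
    if page.peak_sessions > 0:
        decline = 1 - page.current_sessions / page.peak_sessions
    if page.referring_domains >= 10 and decline >= 0.5:
        return "Refresh"
    if page.impressions <= near_zero_impressions and page.referring_domains == 0:
        return "Consolidate"
    return "Review manually"  # assumption: no rule above matched


pages = [
    PageStats("/tool/", 2.0, True, 140, 9000, 8500, 120000),
    PageStats("/old-guide/", 14.0, False, 25, 4000, 1200, 30000),
    PageStats("/thin-post/", None, False, 0, 40, 0, 3),
]
for p in pages:
    print(p.url, classify(p))
```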

Use our post remixer to accelerate the rewrite process on Tier 2 pages, and check the SEO checklist before republishing any updated content.

The technical foundation, and what we actually use to maintain it

Technical SEO is the one area where this guide will deliberately send you elsewhere. Not because it’s unimportant, but because it’s already covered in exhaustive detail in our SEO checklist. Rebuilding that here would just give you two places to keep updated.

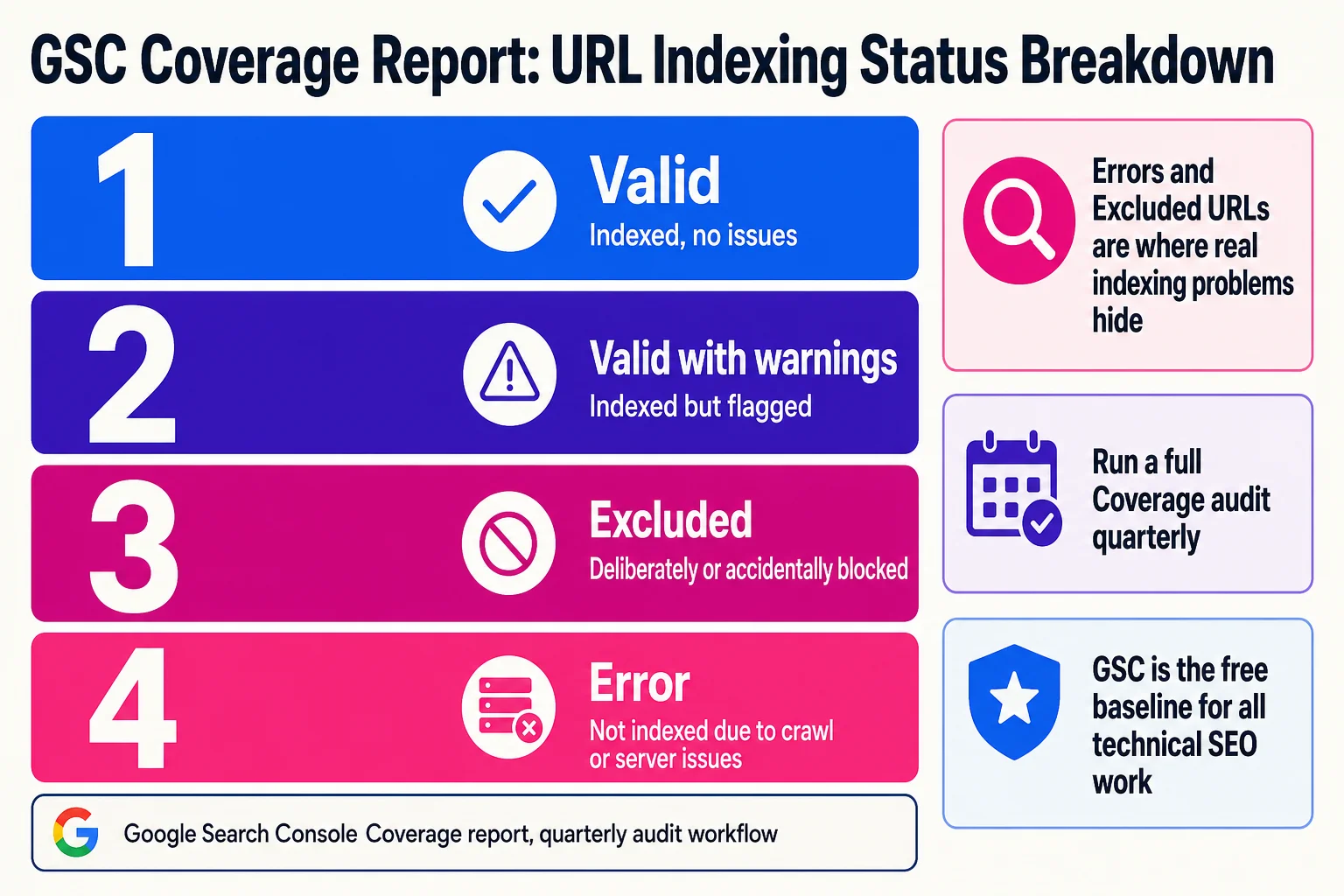

The short version: crawlability and indexing come first. If Google can’t reliably reach your pages, nothing downstream matters. Before you worry about Core Web Vitals, verify your robots.txt isn’t accidentally blocking important pages, your XML sitemap excludes noindexed and redirected URLs, and Google Search Console isn’t surfacing crawl errors or coverage problems you’ve missed.
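The robots.txt check can be scripted with Python's standard library. This is an approximation: `urllib.robotparser` does simple prefix matching, so it will not reproduce Googlebot's handling of wildcards or longest-match precedence. The rules and URLs below are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


# Hypothetical robots.txt with an accidental block on /blog/.
robots = """\
User-agent: *
Disallow: /admin/
Disallow: /blog/
"""
important = [
    "https://example.com/blog/seo-strategy/",
    "https://example.com/tools/",
]
print(blocked_urls(robots, important))
```

Treat any important URL in the output as a prompt to inspect it in Google Search Console, which reflects how Google actually reads the file.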

Once that’s confirmed, Core Web Vitals targets worth hitting are LCP under 2.5 seconds, INP under 200 milliseconds, and CLS under 0.1. The highest-leverage fixes are usually TTFB optimization, converting images to WebP or AVIF, and reserving space for dynamic content so the page doesn’t shift on load.
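Those three targets are simple to encode if you want to flag failing pages from a metrics export. The metric key names are our own, and a value exactly at the limit is treated as passing, which is a choice made for this sketch.

```python
# Targets from the text: LCP 2.5 s, INP 200 ms, CLS 0.1.
GOOD_THRESHOLDS = {"lcp_seconds": 2.5, "inp_ms": 200, "cls": 0.1}


def failing_vitals(metrics: dict[str, float]) -> list[str]:
    """Return the names of Core Web Vitals that exceed their target."""
    return [
        name for name, limit in GOOD_THRESHOLDS.items()
        if metrics[name] > limit
    ]


# Hypothetical field data for one page.
page_metrics = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.25}
print(failing_vitals(page_metrics))
```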

Run a full audit quarterly. The toolset for that doesn’t need to be expensive.

The tools we use

Start with what’s free. Google Search Console is the baseline for everything: indexing status, Core Web Vitals field data, click and impression trends, and since 2024, an AI-powered Recommendations feature that auto-surfaces prioritized fixes rather than leaving you to find them manually. If you’re not in GSC every week, you’re flying without instruments.

Our own free tools cover a few specific jobs that come up constantly during audits and content work. The button generator and URL trimmer are small utilities for on-page work. For content production inside the SEO workflow, the blog post generator speeds up first drafts and the post remixer handles Tier 2 content refreshes from Step 8. All free, no account required.

For paid tools, the honest breakdown is this: Screaming Frog is the best purpose-built crawler available. The free tier handles up to 500 URLs, which covers most small sites. The paid license runs around $259 per year and adds scheduled crawls and API access. If you’re auditing anything larger than a few hundred pages regularly, it’s worth it. For backlink analysis specifically, Ahrefs is the strongest option. Semrush covers more ground as an all-in-one platform if you want keyword research, site auditing, and competitor analysis in one place.

Cost-conscious teams can get a long way by combining GSC, Google Analytics 4, and Screaming Frog’s free tier before committing to a paid platform subscription. That stack covers the fundamentals.

One thing worth noting about GSC’s 2024 updates: the AI-powered report configuration rolled out across accounts and meaningfully reduces the time spent setting up performance filters. The Recommendations tab in particular now flags issues by estimated impact, which is a better triage signal than relying on gut instinct about what’s broken.

The full technical checklist with implementation details lives at /seo-checklist/.

SEO isn’t dead. The easy version is.

No, SEO is not dead in 2026. Organic search still accounts for roughly 53% of all website traffic. Google still handles over 5 trillion searches per year. What’s dead is the version that required no real strategy: find a keyword, write 1,500 words, build a few links, wait. That stopped working when the results page stopped being a clean list of ten blue links.

Sites that do it well are pulling more qualified traffic than ever. Sites that coasted on old tactics are getting replaced by AI Overviews that don’t need to send anyone anywhere.

We’re not exempt. Our traffic dropped 80% from its May 2024 peak. A guide that pretends otherwise isn’t one you should trust.

What to stop doing in 2026

Chasing position 1 as the primary goal. When an AI Overview sits above all organic results, ranking first in the traditional list changes very little. The goal shifts to being cited in the Overview itself, or winning the clicks that come after it.

Keyword stuffing. Writing “best SEO strategy” seventeen times in a 2,000-word post does not help you rank. Google’s language models understand topic relevance without exact-match repetition, and heavy keyword density now reads as a spam signal.

Private blog networks, low-quality directories, and expired domain schemes. These were always a gamble, and the spam updates since 2024 made the odds of getting away with them much worse.

Over-optimized anchor text profiles. If 60% of your inbound links use the exact keyword you’re targeting, that looks like a link scheme. Natural profiles include branded anchors, naked URLs, partial matches, and generic phrases.

Buying links wholesale, or mass-producing them through templated outreach. Both approaches produce exactly the kind of links that get sites hit by spam updates.

Rewriting content purely to hit tool scores. If an optimization tool tells you to add “furthermore” three times and you do it, the content gets worse. Tool scores are a proxy. Human readability comes first.

Ignoring every search surface that isn’t Google. Reddit threads, YouTube results, and ChatGPT responses are where significant portions of your audience now form opinions and make decisions. Optimizing exclusively for Google organic in 2026 is like optimizing exclusively for desktop in 2015.
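On the anchor text point above, you can get a rough read on your own profile from any backlink export. A minimal sketch, assuming you have the anchors as a plain list of strings: the `exact_match_share` helper and the sample anchors are hypothetical, and the 60% figure is the illustrative one from this section, not a published Google threshold.

```python
def exact_match_share(anchors, target_keyword):
    """Fraction of anchors that exactly match the target keyword (case-insensitive)."""
    if not anchors:
        return 0.0
    target = target_keyword.strip().lower()
    exact = sum(1 for anchor in anchors if anchor.strip().lower() == target)
    return exact / len(anchors)


# Hypothetical anchor texts pulled from a backlink export.
anchors = [
    "best seo strategy", "Best SEO Strategy", "best seo strategy",
    "best seo strategy", "best seo strategy", "best seo strategy",
    "Example Co", "https://example.com/", "this seo guide", "click here",
]

share = exact_match_share(anchors, "best seo strategy")
print(f"Exact-match anchors: {share:.0%}")  # Exact-match anchors: 60%
```

A profile like this sample, with six of ten anchors matching the target phrase exactly, is the pattern to steer away from. A healthier mix leans on branded anchors, naked URLs, partial matches, and generic phrases.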

Frequently Asked Questions

What is an SEO strategy?

An SEO strategy is a documented plan for choosing which topics to target, creating content that ranks for those topics, building authority signals that help Google trust the site, and maintaining that content as search behavior changes. A strategy is different from a tactic: it connects individual actions to measurable business goals.

Is SEO dead in 2026?

SEO is not dead, but the low-effort version is. As of Q1 2026, AI Overviews appear on more than 30% of queries and reduce clicks for the organic results below them. Zero-click behavior is rising. But organic search still drives the majority of web traffic, and sites that build genuine authority and create content worth citing continue to grow. The channel is harder and more selective, not gone.

What are the top SEO strategies in 2026?

The highest-ROI activities right now are: updating existing content that already ranks but has declined, building the kind of authority that gets your site cited in AI Overviews and LLM responses, targeting queries where Google still shows a full organic list rather than a generated answer, and distributing content across Reddit, YouTube, and other surfaces where your audience actually searches.

Can I do SEO myself?

Yes. Google Search Console is free and tells you most of what you need to know about your current performance. For most small sites, a founder or marketing generalist can run a real SEO program without an agency, especially in the early stages where the highest-value work is research and content, not technical depth.

If you want to run the 8-step process without rebuilding it from scratch, the template does that work for you.

The marketing strategy generator is a good starting point if you’re still mapping out where SEO fits in the broader picture. The SEO checklist covers the technical foundation. And if you’re ready to start, start here.